Theories

Digital Marketing Theories That Drive Smarter Growth

The Magic Logix Theories hub is your go-to source for expert insights and forward-thinking digital marketing strategies. From foundational concepts like push vs. pull marketing to cutting-edge topics such as AI in marketing and SEO best practices, our thought leadership content is designed to help businesses understand what works in today’s digital landscape and why. Dive into proven theories that blend data, technology, and creativity to elevate your online presence and fuel measurable growth.

Featured Posts

What Makes A Viral Video: 2026 Success Formula

Paid Search Management: A Guide to True Profitability

Update

How to Advertise on Reddit: An SMB & Enterprise Guide

You’ve built repeatable playbooks for Google, Meta, and LinkedIn. Those channels feel legible. You know how to structure tests, where to look when performance slips,

What Makes A Viral Video: 2026 Success Formula

Most videos don’t fail because the idea was bad. They fail because the launch was weak. That’s the part many overlook when they ask what

Paid Search Management: A Guide to True Profitability

You launch a paid search campaign, add budget, and expect the math to improve. Instead, the dashboard gets noisier. Click costs creep up. ROAS looks

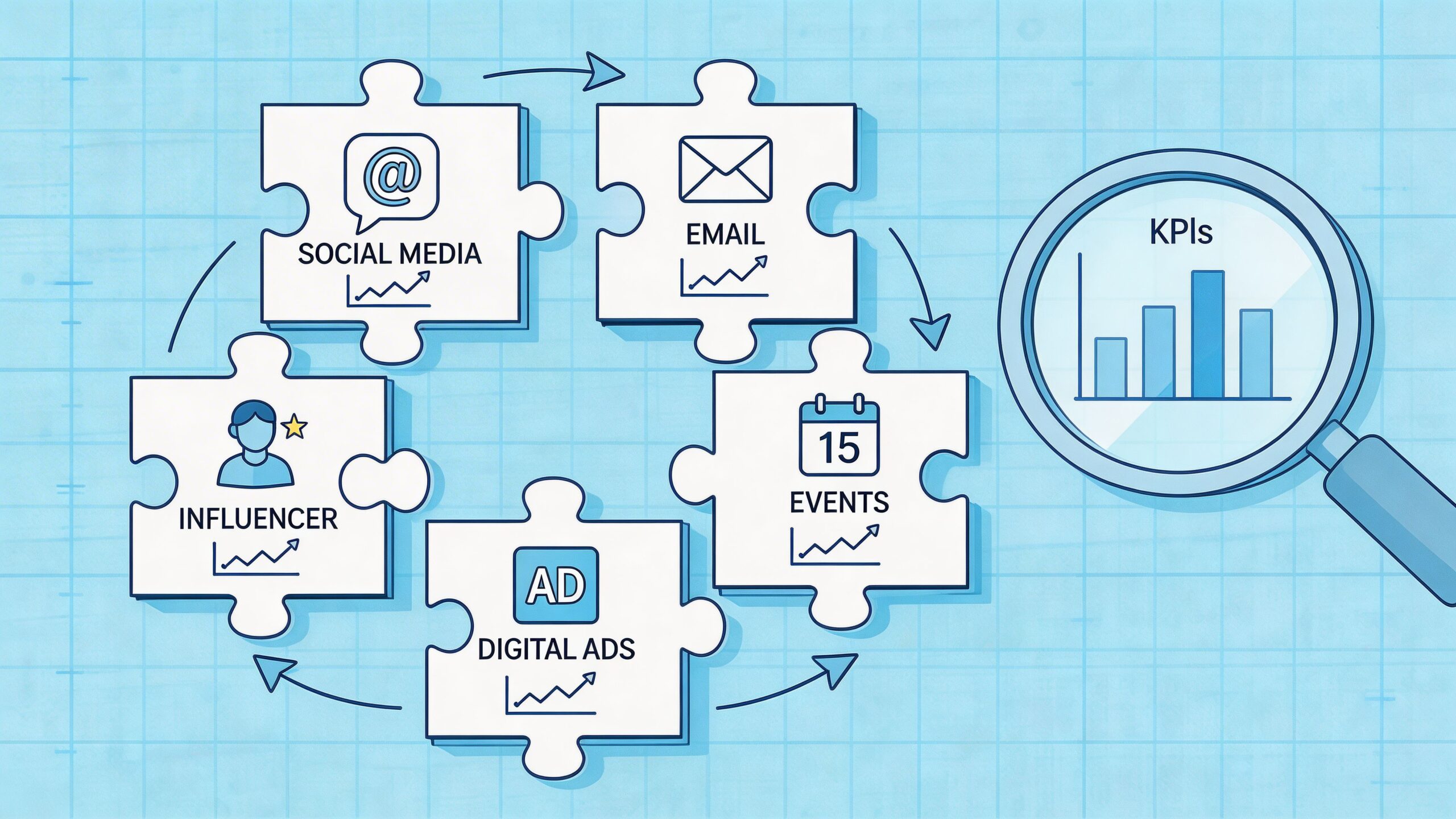

10 Integrated Marketing Campaign Examples for 2026

A consistent pattern shows up in many of the best integrated marketing campaign examples. The campaigns people remember most did not win because they were

Healthcare B2B Marketing: The Complete Guide for 2026

Your team launched a campaign for a strong healthcare product. The messaging was polished. The landing page looked credible. Sales expected demos. Then almost nothing

Small Business Tech Solutions: The 2026 Growth Guide

If your business still runs on a patchwork of spreadsheets, text threads, sticky notes, and memory, you are not behind because you lack ambition. You

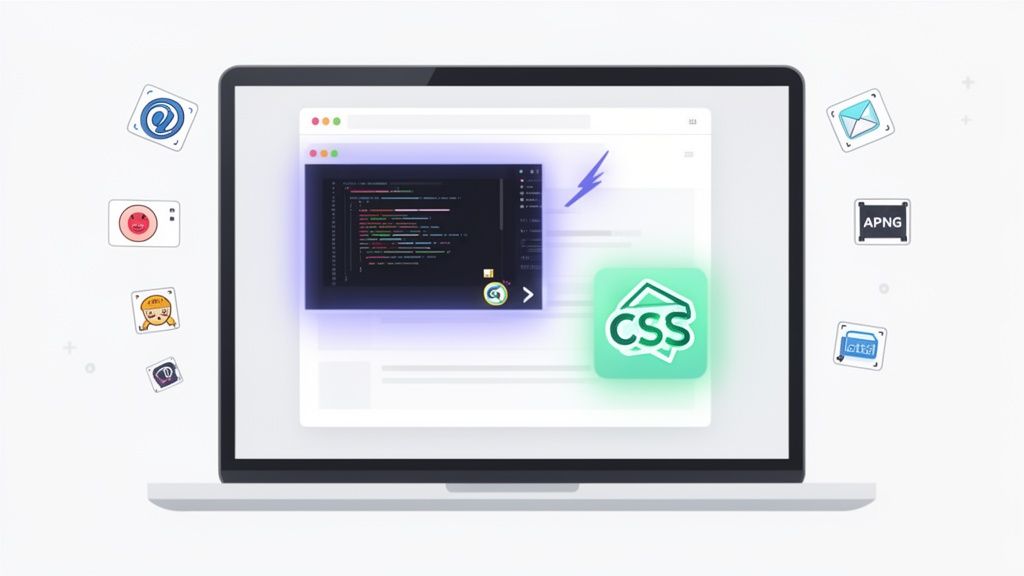

A Guide to Animations for Emails That Convert

Animations in an email are a double-edged sword. On one hand, a well-placed GIF or a slick CSS effect can stop a subscriber mid-scroll and

Content Marketing Dallas: Drive Growth

In the sprawling Dallas-Fort Worth metroplex, getting noticed can feel impossible. Trying to cut through the noise with traditional advertising is like shouting into the

10 Media Planning Examples to Master in 2026

Media planning is more than just allocating budgets; it's the strategic backbone of every successful marketing campaign. Knowing where, when, and how to reach your

B2B Marketing Segmentation: A Modern Guide

B2B marketing segmentation is simply the art of breaking down your massive business audience into smaller, more focused groups. It’s about recognizing that not all